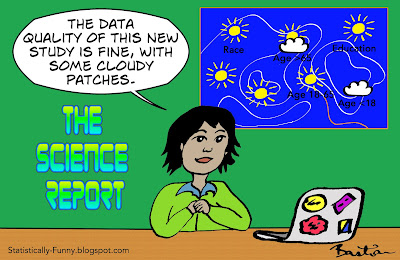

Imagine if weather reports only gave the expected average temperature across a whole country. You wouldn't want to be counting on that information when you were packing for a trip to Alaska or Hawaii, would you?

Yet that's what reports about the strength of scientific results typically do. They will give you some indication of how "good" the whole study is, and leave you with the misleading impression that the "goodness" applies to every result.

Of course, there are some quality criteria that apply to the whole of a study, and affect everything in it. Say I send out a survey to 100 people and only 20 people fill it in. That low response rate affects the study as a whole.

You can't just think about the quality of a study, though. You have to think about the quality of each result within that study. The likelihood is, the reliability of data will vary a lot.

For example, that imaginary survey could find that 25% of people said yes, they ate ice cream every week last month. That's going to be more reliable data than the answer to a question about how many times a week they ate ice cream 10 years ago. And it's likely to be less reliable than their answers to the question, "What year were you born?"

Then there's the question of missing data. Recently I wrote about bias in studies on the careers of women and men in science. A major data set people often analyze is a survey of people awarded PhDs in the United States. Around 90% of people answer it.

But within that, the rate of missing data for marital status can be around 10%, while questions on children can go unanswered 4 times as often. Conclusions based on what proportion of people with PhDs in physics are professors will be more reliable than conclusions on how many people with both PhDs in physics and school-age children are professors.
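To make that arithmetic concrete, here is a minimal sketch in Python. It assumes the missing-data rates apply to the people who answered the survey at all, and it treats the rough figures above ("around 90%", "around 10%", "4 times as often") as exact, which they are not. The function name `usable_share` is made up for illustration.

```python
def usable_share(response_rate, item_missing_rate):
    """Share of everyone surveyed who gave a usable answer to one question.

    Assumes item_missing_rate is measured among people who responded.
    """
    return response_rate * (1 - item_missing_rate)


response_rate = 0.90                     # "around 90%" answer the survey
marital_missing = 0.10                   # "around 10%" skip marital status
children_missing = 4 * marital_missing   # unanswered "4 times as often"

marital = usable_share(response_rate, marital_missing)
children = usable_share(response_rate, children_missing)

print(f"Marital status: usable answers from about {marital:.0%} of those surveyed")
print(f"Children: usable answers from about {children:.0%} of those surveyed")
```

Under those assumptions, the same survey has two quite different levels of coverage depending on which question a conclusion rests on.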

One of the most misleading areas of all for this is the abstracts and news reports of meta-analyses and systematic reviews. They will often sound really impressive: they'll tell you how many studies, and maybe how many people are in them, too. You could get the impression, then, that all the results they tell you about have that weight behind them. The standard-setting group behind systematic review reporting says you shouldn't do that: you should make it clear with each result how much data it rests on. (Disclosure: I was part of that group.)

This is a really big deal. It's unusual for every single study to ask exactly the same questions, and gather exactly the same data, in exactly the same way. And of course that's what you need to be able to pool their answers into a single result. So the results of meta-analyses very often draw on a subset of the studies. It might be a big subset, but it might be tiny.

To show you the problem, I did a search this morning at the New York Times for "meta-analysis". I picked the first example of a journalist reporting on specific results of a meta-analysis of health studies. It's this one: about whether being overweight or obese affects your chances of surviving breast cancer. Here's what the journalist, Roni Caryn Rabin, wrote, and it's very typical:

"Just two years ago, a meta-analysis crunched the numbers from more than 80 studies involving more than 200,000 women with breast cancer, and reported that women who were obese when diagnosed had a 41 percent greater risk of death, while women who were overweight but whose body mass index was under 30 had a 7 percent greater risk."

There really was not much of a chance that all the studies had data on that - even though you would be forgiven for thinking that when you looked at the abstract. And sure enough, this is how it works out when you dig in:

- There were 82 studies and the authors ran 31 basic meta-analyses;

- The meta-analytic result with the most studies in it included 24 out of the 82;

- 84% of those results combined 20 or fewer studies - and 58% had 10 or fewer. Sometimes only 1 or 2 studies had data on a question;

- The 2 results the New York Times reported came from about 25% of the studies and less than 20% of the women with breast cancer.

The risk data given in the study's abstract and the New York Times report did not come from "more than 200,000 women with breast cancer". One came from over 42,000 women and the other from over 44,000. In this case, that's still a lot. Often, it doesn't work out that way, though.
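If you want to check this kind of thing yourself, the sums are simple. The sketch below uses only the study counts quoted above. The two result counts are back-calculated from the rounded percentages, so treat them as approximate. The function name `coverage` is made up for illustration.

```python
TOTAL_STUDIES = 82   # studies in the review
TOTAL_RESULTS = 31   # basic meta-analyses the authors ran


def coverage(studies_in_result, total=TOTAL_STUDIES):
    """Fraction of all the review's studies that feed into one pooled result."""
    return studies_in_result / total


# The best-covered result pooled 24 of the 82 studies.
largest = coverage(24)

# Back-calculated from the rounded percentages, so approximate.
results_with_20_or_fewer = round(0.84 * TOTAL_RESULTS)
results_with_10_or_fewer = round(0.58 * TOTAL_RESULTS)

print(f"Best-covered result: {largest:.0%} of the studies")
print(f"About {results_with_20_or_fewer} of {TOTAL_RESULTS} results pooled 20 or fewer studies")
print(f"About {results_with_10_or_fewer} of {TOTAL_RESULTS} results pooled 10 or fewer studies")
```

So even the result with the most studies behind it draws on well under a third of the "more than 80 studies" in the headline figure.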

So be very careful when you think, "this is a good study". That's a big trap. It's not just that all studies aren't equally reliable. The strength and quality of evidence almost always varies within a study.

Want to read more about this?

Here's an overview of the GRADE system for grading the strength of evidence about the effects of health care.

I've written more about why it's risky to judge a study by its abstract at Absolutely Maybe.

And here's my quick introduction to meta-analysis.

Good post.

Thanks!

Statistics are like a bikini. What they reveal is suggestive, but what they conceal is vital. -Aaron Levenstein

I got to your blog while I was literally looking for something statistically funny, you picked an awesome blog name.

I have not been able to confirm if your blog is statistically funny, while I do find it funny myself I am just a sample, and I am biased as I am drawn to statistics.

So I decided to run a test, took one of your cartoons and shared it in facebook, the results after 1 hour: It was liked by an artist, a engineer, a sociologist, and a system administrator loved it.

The sample is small but I have a feeling there may be a trend. Have you run tests against your site to see if its statistically funny? How big is your sample? What are the methods used?

If you do you can count me and 4 of my friends on the ones that consider it funny group. :D

Aw thanks, sanxiago! Made my day! I thought the name was an awful lot to live up to, but it was too good a name not to go for it anyway.
